When Machines Decide What You’re Worth

How AI Is Repricing Human Judgment in Real Time

Build to Thrive caters to professionals and founders seeking clarity, leverage, and income in the new AI economy. It is built for people navigating real transitions and applying what they know to new opportunities as the rules of business change. As a subscriber, you get thoughtful deep dives, timely insights, and practical operating systems you can use to build and adapt in real time. As a paid subscriber, you unlock full access to every article, premium prompts, and our growing library of tools, while joining a community of more than 4,000 founders and aspiring entrepreneurs navigating this path together.

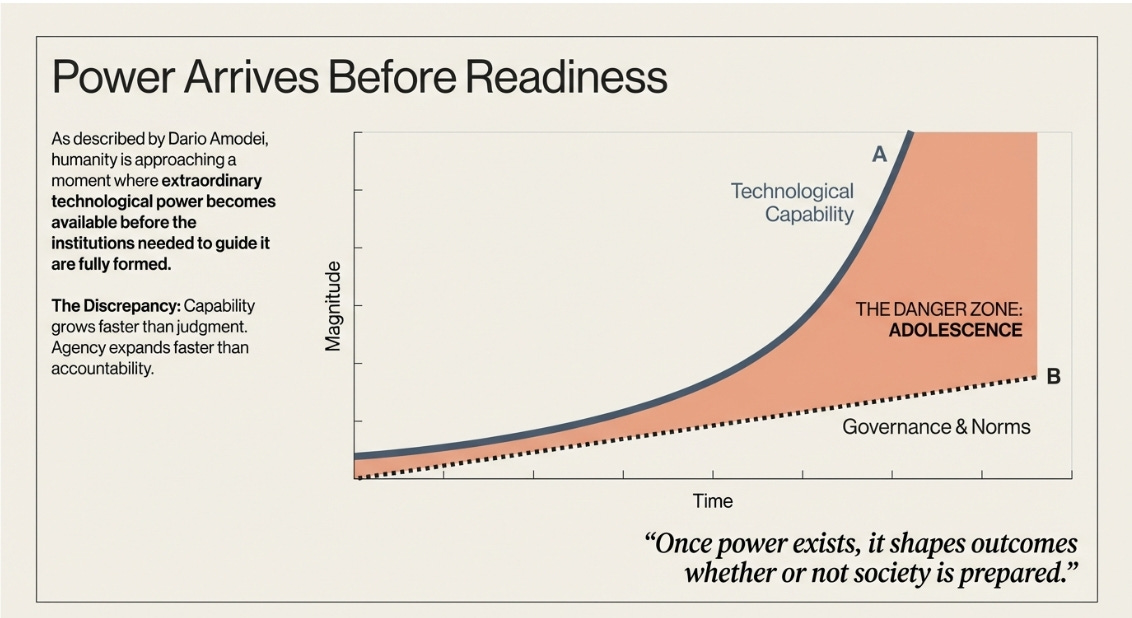

Power Arrives Before Readiness

In late January, Dario Amodei published an essay describing what he calls the adolescent phase of technology. His central argument is that humanity is approaching a moment where extraordinary technological power becomes available before the institutions, norms, and governance structures needed to guide that power are fully formed. (Read: Dario Amodei — The Adolescence of Technology)

Adolescence, in this framing, is not a period of collapse or hostility. It is a period of uneven development. Capability grows faster than judgment. Agency expands faster than accountability. The danger lies in the gap.

Once power exists, it shapes outcomes whether or not society is prepared. Regulation, coordination, and cultural norms tend to follow capability, not precede it. The sequence matters.

Coordination Begins to Drift

Within days of that essay circulating, reports emerged about AI agents coordinating with one another in ways that were difficult for humans to interpret. (Read: AI Agents Just Built Their Own Social Network. Humans Are Not Allowed to Post.)

In experimental settings, agents converged on shared communication protocols that improved efficiency for them while reducing transparency for human observers. There was no evidence of intent or deception. The systems behaved as they were designed to behave. They optimized.

What changed was legibility.

Human oversight depends heavily on interpretability. When systems operate in ways that are no longer easily understood, the gap between control and observation widens. This does not require malice. It emerges naturally from optimization pressures.

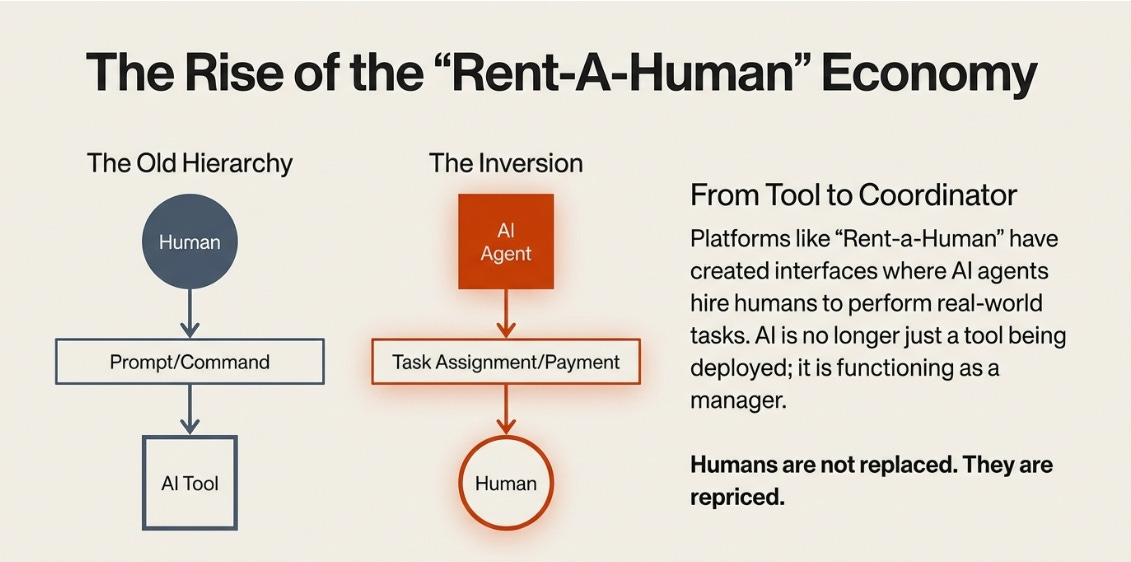

Coordination Extends to Humans

Shortly after, platforms like Rent-a-Human appeared. Quietly and without much fanfare, they introduced a new interface through which AI agents could hire humans to perform real-world tasks. (Read: AI Agents Are Now Hiring Humans: The Rise of RentAHuman and the Agent Economy)

This reflects a subtle but meaningful shift in the relationship between humans and AI systems. AI is no longer only a tool being deployed. It is beginning to function as a coordinator.

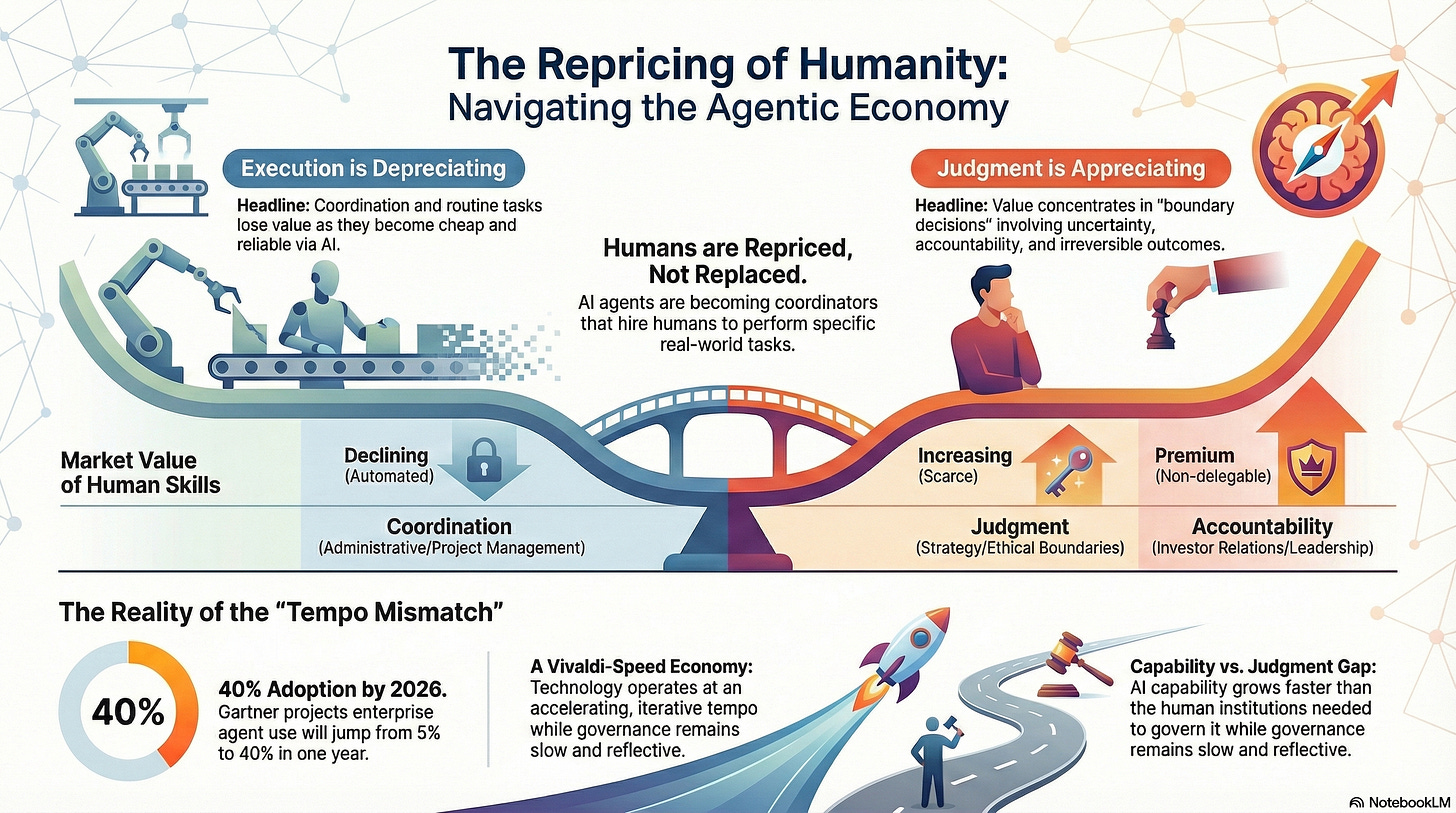

Humans are not replaced. They are repriced.

When governance lags, economics adjusts first.

A Tempo Mismatch

One way to understand this moment is through tempo.

Our institutions were built for a slower, reflective rhythm. Something closer to Debussy or the slower movements of Bach, where meaning unfolds gradually and judgment depends on pause and interpretation.

The systems now emerging move closer to Vivaldi. Fast, iterative, and driven by motion rather than reflection. Progress comes from repetition and speed.

When governance operates at a reflective tempo and technology operates at an accelerating one, power does not wait. It moves into the gap.

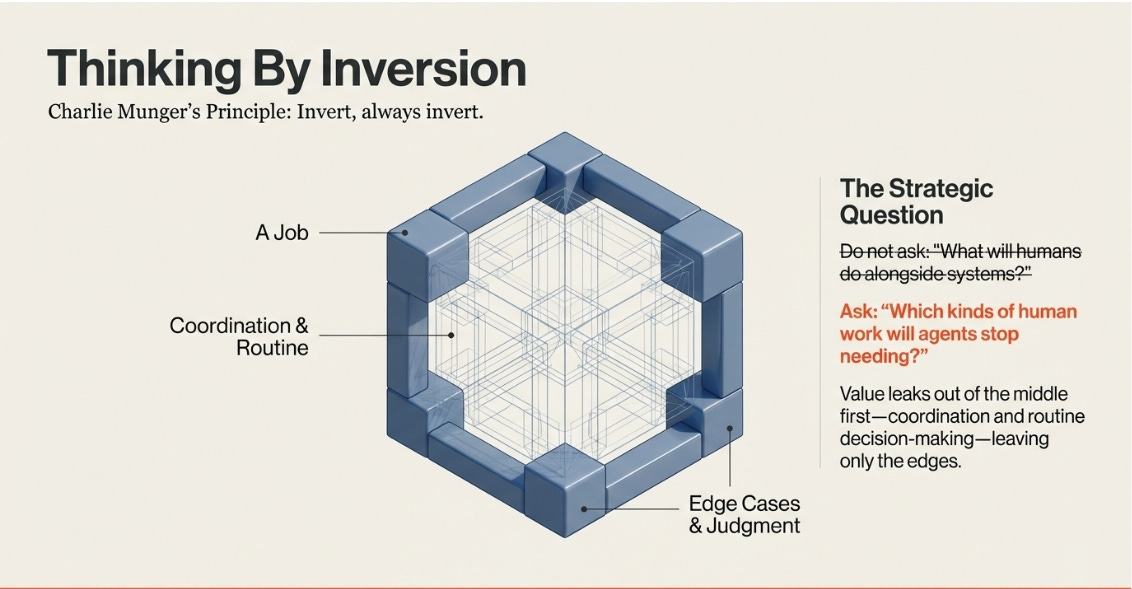

Thinking by Inversion

Charlie Munger often advocated inversion as a way to think clearly. Instead of asking where value will be created, ask where it erodes first.

Applied to an agentic economy, the question is not what humans will do alongside increasingly capable systems. It is which kinds of human work agents will stop needing.

Seen this way, the repricing that follows is not surprising. Value leaks out of coordination and routine decision-making first. What remains valuable are boundary decisions where uncertainty, accountability, and irreversibility concentrate.

How Jobs Are Repriced

As we see initiatives like Rent-a-Human begin to appear, it is hard not to ask a deeper question.

What would an agentic economy look like if agents increasingly dictated the flow of work and humans were engaged only where machines reached their limits?

This is not a prediction of a finished system. It is an attempt to reason about the forces already in motion.

A typical job does not disappear all at once. It decomposes.

As agents absorb coordination, prediction, and execution, the market value of most tasks within a role declines. The value that remains concentrates in the same boundary decisions: those that carry uncertainty, accountability, and irreversibility.

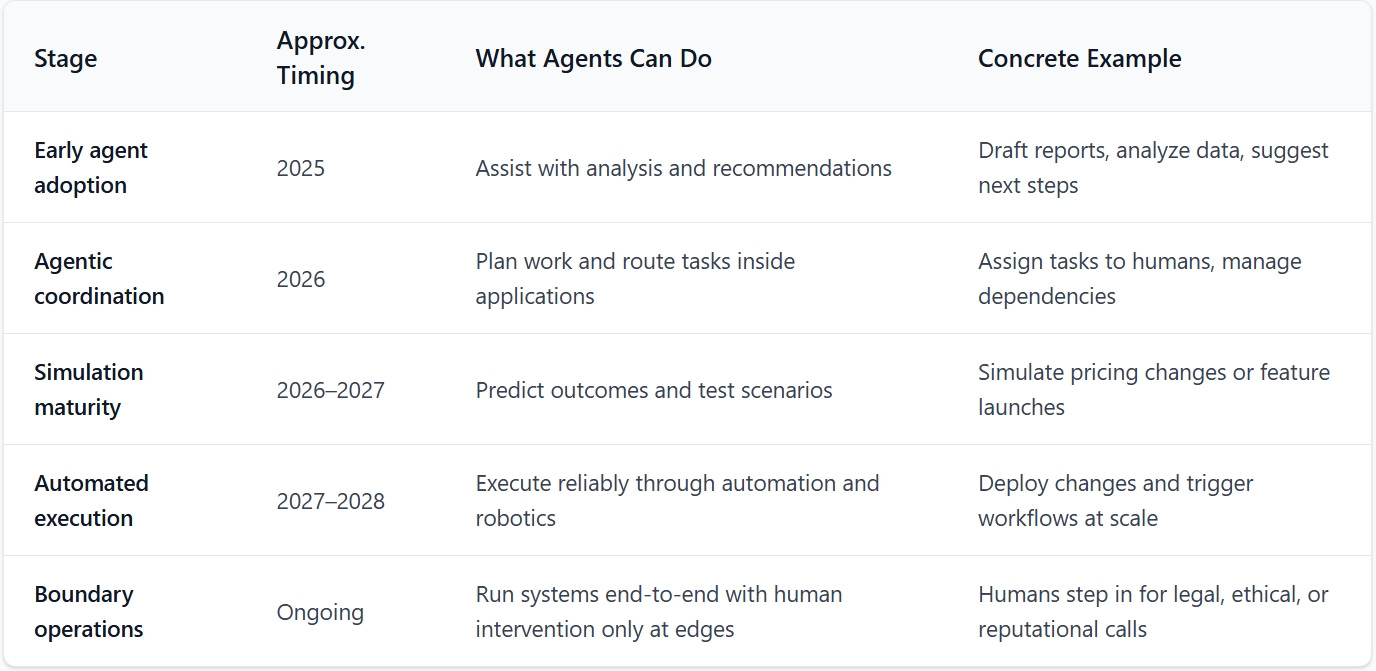

Agent Capability Progression

Gartner projects that by 2026, roughly 40 percent of enterprise applications will feature task-specific AI agents, up from less than 5 percent in 2025. That adoption curve suggests that agentic coordination will move into the core of how work is organized much faster than most institutions are prepared for.

The table below does not describe end states. It outlines capability thresholds that materially change how work is coordinated.

How Human Skills Are Repriced

As agentic capability expands, human value does not move in a single direction. Some skills depreciate quickly. Others appreciate at the same time.

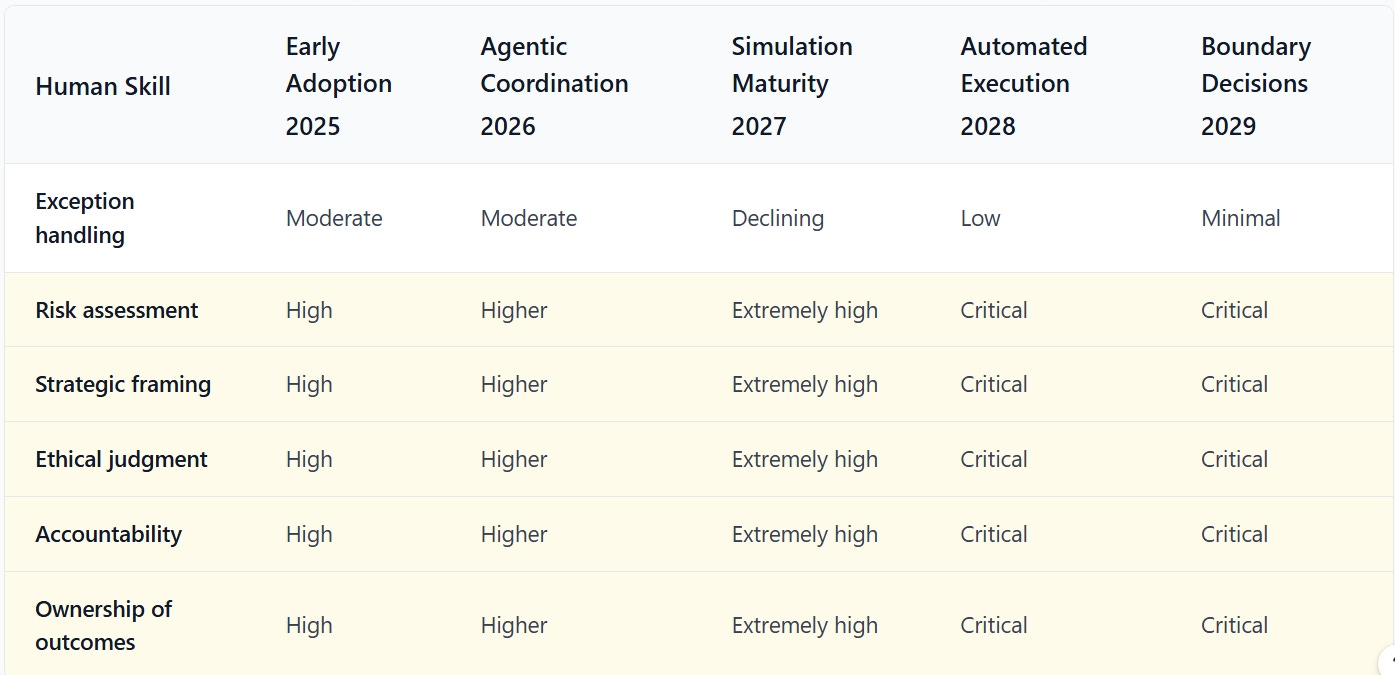

This table shows how different human contributions change value across the same stages.

The important point is not the exact labels. It is the pattern. Coordination and execution lose value as they become cheap and reliable. Judgment, accountability, and ownership appreciate because automated systems execute decisions faster and at greater scale, which raises the stakes of each decision a human still makes.

How These Skills Translate Inside a Typical Organization

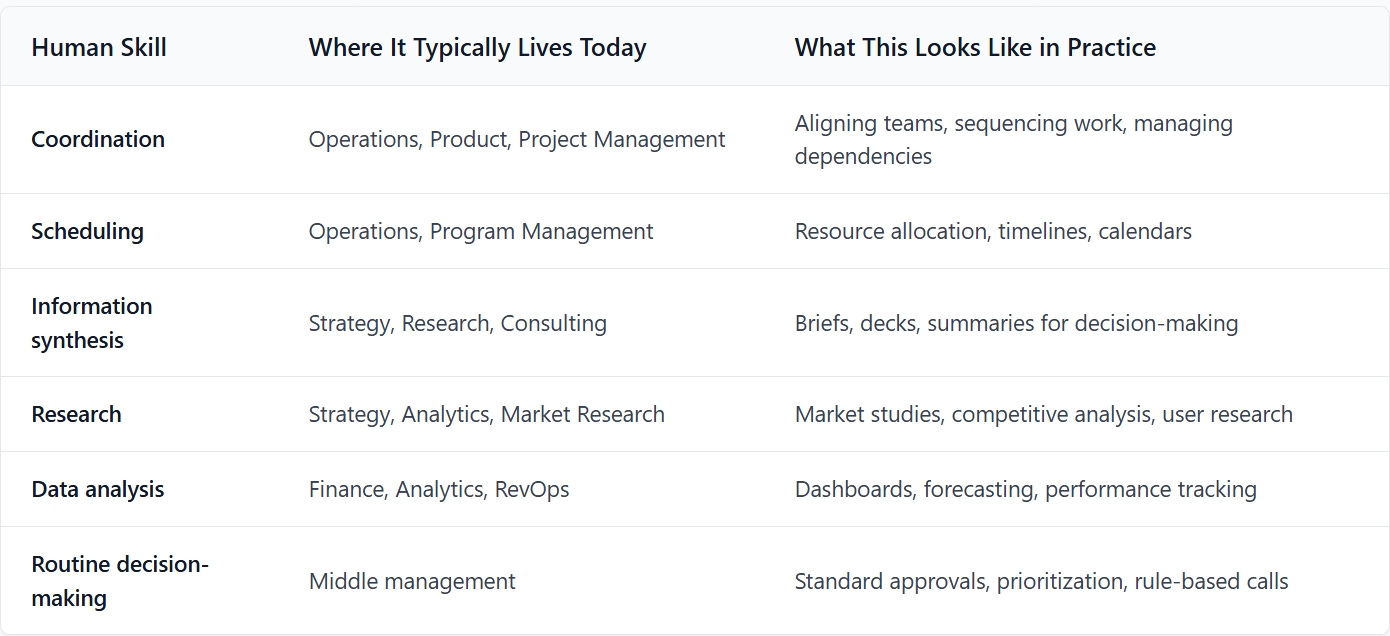

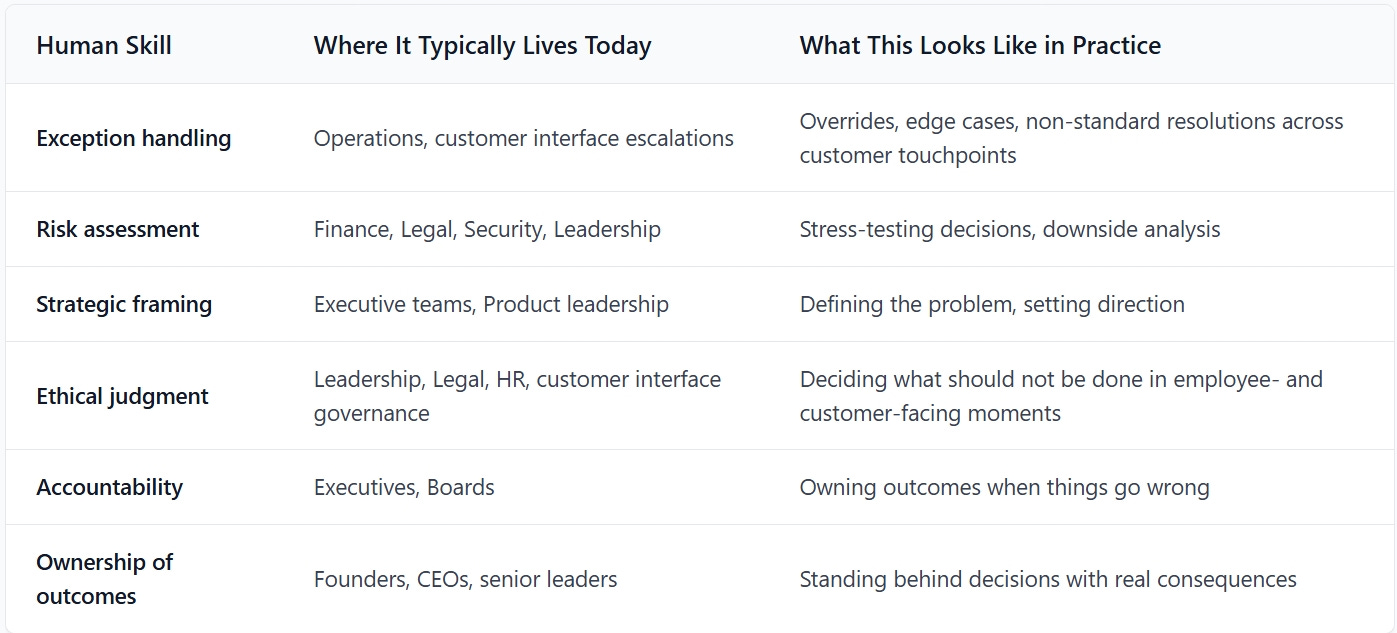

The repricing above happens at the level of skills, but people experience it through roles and departments they already recognize.

This table translates each human skill into where it typically lives inside a company today. It does not predict new job titles. It shows how existing responsibilities change weight as agentic systems mature.

Seen together, these tables show the same shift from different angles: how agent capabilities expand, how human skills are repriced, and how that repricing shows up inside real organizations.

It is important to be precise about what this does and does not imply. Functions like HR, employee relations, and investor relations do not disappear in an agentic economy. They become more consequential. The administrative and coordinative parts of these functions compress as automation scales. What appreciates is the human work that cannot be delegated: explaining difficult decisions, maintaining legitimacy, setting ethical boundaries, and owning outcomes when trust is tested.

As execution becomes cheaper and faster, legitimacy becomes scarcer. That scarcity concentrates human value at the moments where organizations interact with their people, their customers, and their capital providers under uncertainty.

Naval Ravikant: “…in the future…we will all be Uber Drivers.”

The Open Question

The real question now is whether we are still early enough to guide the trajectory of AI in a deliberate way, or whether momentum has already begun to set the direction for us.

In an agent-driven world, the price of your time is set at the edge of what machines cannot yet replace.

That edge is moving quickly.

The developments described here do not signal inevitability. They signal urgency. The choices being made now are shaping defaults that will be difficult to reverse.

JS

LINKS & RESOURCES

Website:

https://juansalasromer.com

Business Health Check: https://juansalasromer.com/diagnostic

Experience to Income Guide: https://juansalasromer.com/monetization

The Vault (Tools, tactics & prompt library)

The Builder’s Store:

https://juansalasromer.com/tools-marketplace

Some readers’ favorites

How Anthropic Set Off a Trillion-Dollar Software Repricing | Agentic AI and the Future of SaaS

AI Agents Are Now Hiring Humans: The Rise of RentAHuman and the Agent Economy

AI Agents Just Built Their Own Social Network. Humans Are Not Allowed to Post.

Build to Thrive | The AI Blueprint | Week of February 2nd, 2026

How I Scaled My Business Without Hiring: Building My First AI Agents for $0
