Build to Thrive | The AI Blueprint | Week of May 4th, 2026

The Stack Audit. How to cut token spend and improve your return on AI.

Editorial

The Stack Audit

AI tooling got expensive this year. Providers raised prices, dropped enterprise discounts, and shifted to consumption-based pricing. CFOs are now reading invoices that used to disappear quietly into IT line items. The bill is the conversation now.

I had been watching this happen for months and had not done anything about my own stack.

So in April I sat down and audited my own AI stack the way I would audit a software vendor.

I had let it grow. Roughly thirty scheduled workflows across the week. A COO running the morning check-in. A Chief of Staff orchestrating the daily flow. A research agent scanning operator-relevant sources every morning. A content coach proposing weekly themes. Content agents producing the Blueprint, the article, and the LinkedIn batch. A feedback agent capturing what the audience said back. Plus supporting tasks: a daily reading list, a pre-launch countdown firing every day even though the launch was eight weeks out, recurring market scans firing multiple times a day, and a handful of smaller workflows. Each one seemed reasonable in isolation. None of them had been audited together.

The thirty workflows are not random subscriptions. They make up the flywheel I run as a one-person curator practice: a research layer, a content layer, a feedback layer, a delivery layer, and a Kaizen layer that audits the fleet every Friday. I walked through the full architecture in How I Built My AI Chief of Staff. The system works. The April audit was not about tearing it apart. It was about making it run leaner.

The bill kept climbing toward $300 a month, and I could not point to which agent was doing the most work or which was doing the least.

So I did what I would do for a client. I went manual first to understand how the machine actually worked. Listed every scheduled task. Wrote down what each one produced. Wrote down which output I actually read. Crossed out the ones that did not earn their cost. Then I ran the cuts past AI for a second opinion before disabling anything. Manual to see the design, AI to validate the call.

Five changes came out of one morning of work. I disabled the daily operator briefing because the same information was already in my morning check-in. I disabled the daily reading list because the feedback agent was already covering it. I changed the pre-launch countdown from daily to weekly, on Sundays, because it was firing the same output every day for no reason. I disabled a redundant midday scan because the morning and afternoon versions of the same scan already covered it. I added a standing rule that scheduled tasks running unattended must not ask clarifying questions, because they were waiting for answers that never came and burning budget while they sat.

Result: about 45 to 50 percent off daily spend. From one morning of work.

The bigger lesson is the one I keep returning to. Most operators audit their software vendors. They negotiate the SaaS bill once a year. They cancel the tools nobody uses. The same discipline applied to an AI stack pays even faster, because the marginal cost of every wasted run is real and immediate, not absorbed into a flat subscription.

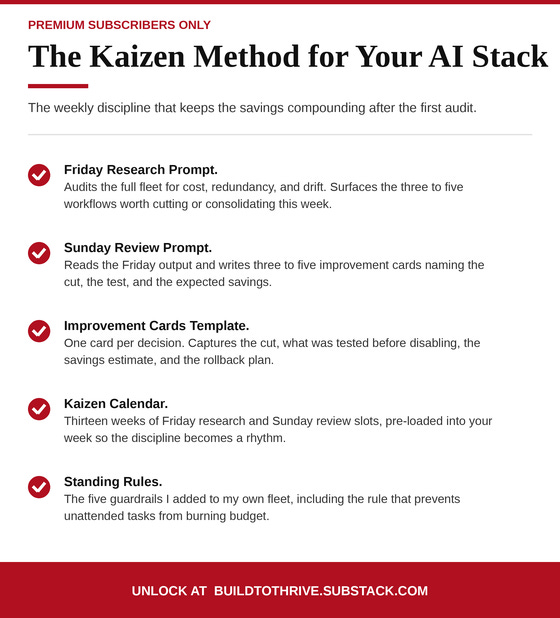

I now run a Kaizen audit on the fleet every Friday. The first audit found the obvious cuts. The audits since then keep finding smaller ones.

The work is not in adding agents. It is in subtracting them.

This issue is the principle and the practice. Three moves to start your own audit. A free tool I built that runs the audit for you in ten minutes if you have an OpenAI or Anthropic account. And three free prompts that cut tokens on day-to-day AI use.

Juan

45 to 50 percent. What I cut from my own daily AI spend in one morning of audit work in April. The cuts came from disabling redundant agents and adding one standing rule that prevented unattended tasks from burning budget on questions nobody answered.

98 percent. Share of organizations now actively managing AI spend, up from 63 percent a year ago, per the State of FinOps 2026 report. The discipline of measuring AI cost has gone from optional to standard practice in twelve months.

3 to 5 times. How much more output tokens (what the AI sends back) cost than input tokens (the prompts you send in). The biggest savings come from shortening what you ask the AI to send back, not from trimming the prompts you send.
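To see why the output side matters more, here is a small worked example. The prices are illustrative assumptions (dollars per million tokens, with output at 5 times input, the top of the 3 to 5 times range), not any provider's actual rate card, and the 2,000-in / 800-out call is a hypothetical typical request.

```python
# Illustrative prices in dollars per million tokens (assumed, not a real rate
# card); output is priced at 5x input, the top of the 3-5x range.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00


def call_cost(input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one call under the assumed prices."""
    return (input_tokens * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


baseline = call_cost(2_000, 800)      # hypothetical typical call
trim_prompt = call_cost(1_400, 800)   # prompt cut by 30 percent
trim_answer = call_cost(2_000, 560)   # answer cut by 30 percent

for label, cost in [("baseline", baseline),
                    ("prompt -30%", trim_prompt),
                    ("answer -30%", trim_answer)]:
    saving = 1 - cost / baseline
    print(f"{label:12s} ${cost:.4f}  saves {saving:.0%}")
```

Under these assumptions, the same 30 percent cut saves 10 percent when applied to the prompt and 20 percent when applied to the answer.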

What happened

Anthropic raised its published Claude Code cost estimate from $6 to $13 per developer per active day after dropping enterprise volume discounts. Axios reported that Uber’s CTO burned the company’s full 2026 AI budget on token costs early in the year. The pattern is consistent across providers: AI tooling costs are rising, becoming more variable, and arriving as a board-level concern.

What it means

Six months ago the conversation was about adding more AI. Today it is about defending what is already being spent. CFOs and operations leaders are now AI buyers in addition to (and often more urgently than) IT leaders. They are not asking for more capability. They are asking for accountability. The operator who has already done the audit on their own stack has the credibility (and the artifacts) to talk about the discipline that produces it.

What happened

Among the companies that have applied AI cost discipline, the documented savings are large. AT&T cut 90 percent of customer service AI costs by replacing frontier models with fine-tuned smaller ones. Klarna’s AI customer service cost dropped 40 percent in two years, from $0.32 per transaction to $0.19. Operators who save and reuse repeat answers see a 50 to 90 percent reduction on those workflows. Smart model routing alone (cheap models for easy work, premium models for hard work) cuts costs 60 to 85 percent.
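"Save and reuse repeat answers" can be as simple as an exact-match cache in front of the model. The sketch below is an assumption-laden illustration, not how any of the companies above built theirs: `ask_model` is a hypothetical stand-in for a paid API call, and matching is done on a normalized prompt (lowercased, whitespace collapsed) rather than on meaning, which is a simpler technique than semantic caching.

```python
import hashlib
from typing import Callable, Dict


class AnswerCache:
    """Reuse stored answers for repeat prompts instead of paying for a new call.

    A minimal in-memory sketch; a real setup would persist the store and expire
    stale answers.
    """

    def __init__(self, ask_model: Callable[[str], str]):
        self._ask_model = ask_model  # hypothetical function that calls an LLM
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Normalize case and whitespace so trivially different repeats still match.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def ask(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self._store:
            self.hits += 1          # repeat question: no paid call
            return self._store[key]
        self.misses += 1            # new question: pay once, then store
        answer = self._ask_model(prompt)
        self._store[key] = answer
        return answer


paid_calls = []


def fake_model(prompt: str) -> str:
    # Stand-in for a paid API call; records each call so we can count them.
    paid_calls.append(prompt)
    return f"answer to: {prompt}"


cache = AnswerCache(fake_model)
for q in ["What is our refund policy?",
          "what is our  refund policy?",
          "What are your opening hours?",
          "What is our refund policy?"]:
    cache.ask(q)
print(f"paid calls: {len(paid_calls)}, cache hits: {cache.hits}")
```

Four questions cost two paid calls here; the share saved on a real workflow depends on how often its questions genuinely repeat.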

What it means

The wins are reproducible across stacks of every size. They do not require technical sophistication. They require discipline and a regular audit. My own audit found 45 to 50 percent in a morning. The published case studies at larger companies land in a similar range, from 40 to 90 percent. The bigger the stack and the longer it has gone unaudited, the bigger the first cut.

If you missed it, my recent piece on how senior operators are charging for their expertise through AI Micro Consulting names the category that is changing how solo operators package their work in 2026. Forty-eight-hour engagements, fixed scope, $750 to $3,000 per deliverable, five named niches commanding senior specialist pricing. Today’s audit discipline is the operator-side margin story. The Micro Consulting piece is the operator-side revenue story. Pair them and you have the full delivery economics.

Three moves you can run this weekend with no purchase required. Each one saves money the same week you set it up.

Move 1: Set up Concise-Mode in your main AI tool. Most AI conversations open with verbose preambles (“Great question,” “Absolutely,” “Let me walk you through this”), restate your question, and pad outputs with caveats. None of it earns its tokens. Paste a one-time instruction into your Claude Project or ChatGPT custom instructions: “You are a terse senior advisor. No preambles. Direct answer first. Restate nothing. If I need more, I will ask.” Saves 30 to 50 percent on output across every conversation from this point on. Fifteen minutes to set up. Compounds forever.

Move 2: Build your Operator Second Brain. Most readers paste the same context (their style guide, past client work, prompt library, case studies) into AI conversations every time. Stop. Build the knowledge base once and let the AI pull from it on demand. Create a Claude Project called “Operator Second Brain” (or a NotebookLM notebook), upload your top eight reference files, and run all your future AI work inside it. The AI grounds every answer in your real source material instead of guessing from memory. I walked through the full setup in my Second Brain piece. Saves 40 to 50 percent on knowledge work. Pays back in better answers, not just lower bills.

Move 3: Match the model to the task. Stop running every AI request on the most expensive model. Pick a cheap model (Haiku, GPT-4o mini, DeepSeek V4-Flash) for routine work: classifications, summaries, lookups, structured extraction, first drafts. Reserve premium models (Opus, GPT-5.5) for the work that genuinely needs deeper reasoning. The cost gap between tiers is roughly five to twenty times. Routing this way frees 50 to 70 percent of your budget for the work that actually needs the premium tier.
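A minimal sketch of Move 3 as a rule-based router, assuming you tag each task with a type. The task kinds, the two tier names, and the 10 to 1 relative price (a midpoint in the five to twenty times gap) are illustrative assumptions, not real model names or prices; production routers often use a classifier instead of a fixed list.

```python
from dataclasses import dataclass
from typing import List

# Routine task types from Move 3 that a cheap model usually handles well.
ROUTINE_TASKS = {"classification", "summary", "lookup", "extraction", "first_draft"}

# Assumed relative cost per task: premium at 10x cheap, inside the 5-20x gap.
TIER_COST = {"cheap": 1.0, "premium": 10.0}


@dataclass
class Task:
    name: str
    kind: str  # hypothetical tag, e.g. "summary" or "strategy"


def route(task: Task) -> str:
    """Return the model tier: cheap for routine work, premium otherwise."""
    return "cheap" if task.kind in ROUTINE_TASKS else "premium"


def routed_saving(tasks: List[Task]) -> float:
    """Fraction of spend saved versus sending every task to the premium tier."""
    all_premium = TIER_COST["premium"] * len(tasks)
    routed = sum(TIER_COST[route(t)] for t in tasks)
    return 1 - routed / all_premium


# A hypothetical week: seven routine tasks, three that need deeper reasoning.
week = ([Task(f"summary {i}", "summary") for i in range(4)]
        + [Task(f"tag {i}", "classification") for i in range(3)]
        + [Task("pricing strategy", "strategy"),
           Task("client proposal", "reasoning"),
           Task("contract review", "reasoning")])

print(f"saved vs all-premium: {routed_saving(week):.0%}")
```

With seven of ten tasks routed to the cheap tier, this prints a 63 percent saving, inside the 50 to 70 percent range above. The real number depends on your task mix and actual prices.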

Three free prompts you can paste directly into Claude or ChatGPT to start cutting tokens on day-to-day AI use. Each one is drawn from a published pattern operators are using to reduce token spend in 2026. Each links to its full page in the Prompt Library.

The Concise-Mode Setup

A one-time instruction you paste into your Claude Project or ChatGPT custom instructions. Strips preambles (“Great question,” “Absolutely,” “Let me walk you through this”), forces direct answers, and removes the apologies and restatements that pad every output. Adapted from the open-source claude-token-efficient pattern by drona23 on GitHub. Set once. Applies to every conversation after.

The Conversation Compression

A handoff prompt to run after 15 to 20 messages in any AI chat. Has the AI compress the conversation into a structured handoff (what was accomplished, work in progress, open questions, next actions) you paste into a fresh chat. Because every new message resends the entire conversation, total tokens grow roughly quadratically with its length; the handoff resets that growth before it kills the session. Adapted from the published context compaction patterns in the Microsoft Agent Framework and Mario Zechner’s Context Compaction Research survey.
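The quadratic growth is easy to check with a simplified model. The sketch assumes each exchange adds a fixed 500 tokens, the full history is resent as input on every turn (no provider-side caching), and the handoff summary is 1,000 tokens; all three numbers are illustrative, not measurements.

```python
from typing import Optional


def session_input_tokens(turns: int, tokens_per_turn: int,
                         compress_every: Optional[int] = None,
                         handoff_tokens: int = 0) -> int:
    """Total input tokens billed when every turn resends the full history.

    If compress_every is set, the history is replaced by a handoff summary of
    handoff_tokens after that many turns, like pasting the handoff into a
    fresh chat. Each exchange is treated as one block of tokens.
    """
    total = 0
    history = 0
    turns_since_reset = 0
    for _ in range(turns):
        history += tokens_per_turn   # the new exchange joins the context
        total += history             # the whole context is billed as input
        turns_since_reset += 1
        if compress_every and turns_since_reset == compress_every:
            history = handoff_tokens  # continue from the compressed handoff
            turns_since_reset = 0
    return total


# Assumed numbers: 500 tokens per exchange, a 1,000-token handoff after turn 15.
short = session_input_tokens(15, 500)
baseline = session_input_tokens(30, 500)
compressed = session_input_tokens(30, 500, compress_every=15, handoff_tokens=1_000)

print(f"15 turns: {short:,}  30 turns: {baseline:,}  with handoff: {compressed:,}")
print(f"saved by the handoff: {1 - compressed / baseline:.0%}")
```

In this model, doubling a chat from 15 to 30 turns nearly quadruples the input tokens (60,000 to 232,500), while one handoff at turn 15 brings the 30-turn total down to 135,000, about 42 percent less.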

The Model-Routing Recommender

Reads a task you are about to send to an AI and recommends the cheapest model tier that can handle it, plus the leanest prompt, plus the right output budget. Routing routine work to a smaller model (Haiku, GPT-4o mini, DeepSeek V4) frees 50 to 70 percent of your budget for the work that actually needs the premium tier. Built on the operator routing tables from Ian Paterson’s 15-LLMs-on-38-tasks benchmark and the TokenMix.ai 2026 cost math.

Token Savings Copilot. The audit I described above, productized so anyone can run it in ten minutes. Free. Privacy-first: your data never leaves your browser. Drop in your OpenAI or Anthropic CSV usage export and the tool surfaces specific waste alerts (over-context calls, premium models on routine tasks, high-frequency calls eligible for caching) and generates a personalized five-step weekend savings plan you can download as a text file.
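For readers who want to see what those three alerts mean in practice, here is a minimal sketch of similar checks on a usage CSV. This is not the Copilot's code. The column names, model labels, and thresholds are all hypothetical; real OpenAI and Anthropic exports use their own schemas, so you would map these to the columns your file actually contains.

```python
import csv
import io
from collections import Counter

# Hypothetical labels and thresholds; adjust to your own export and pricing.
PREMIUM_MODELS = {"claude-opus", "gpt-5.5"}  # assumed strings for premium tiers
MAX_CONTEXT = 20_000   # input tokens above this are flagged as over-context
SHORT_OUTPUT = 300     # premium calls returning less than this look routine
HIGH_FREQUENCY = 3     # a workflow run more often than this is a caching candidate


def audit(rows):
    """Return waste alerts for rows with model, token counts, and a workflow label."""
    over_context = []
    premium_routine = set()
    runs = Counter()
    for row in rows:
        workflow, model = row["workflow"], row["model"]
        inp, out = int(row["input_tokens"]), int(row["output_tokens"])
        runs[workflow] += 1
        if inp > MAX_CONTEXT:
            over_context.append((workflow, inp))
        if model in PREMIUM_MODELS and out < SHORT_OUTPUT:
            premium_routine.add((workflow, model))
    alerts = [f"over-context: {w} sent {n:,} input tokens" for w, n in over_context]
    alerts += [f"premium model on routine task: {w} ({m})"
               for w, m in sorted(premium_routine)]
    alerts += [f"caching candidate: {w} ran {c} times"
               for w, c in runs.items() if c > HIGH_FREQUENCY]
    return alerts


SAMPLE = """model,input_tokens,output_tokens,workflow
claude-opus,1200,150,tag-email
claude-opus,1100,140,tag-email
claude-opus,1300,160,tag-email
claude-opus,1250,155,tag-email
gpt-5.5,45000,900,weekly-report
claude-haiku,800,400,summary
"""

alerts = audit(csv.DictReader(io.StringIO(SAMPLE)))
for alert in alerts:
    print(alert)
```

On the made-up sample, this flags the oversized weekly report, the email-tagging workflow running on a premium model, and the same workflow as a caching candidate.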

If you use AI through consumer subscriptions (ChatGPT Plus, Claude.ai) and do not have CSV exports, the three prompts above run the same audit manually.

Six items to keep this honest. After your weekend, run through this list. If you can check all six, the savings start compounding from here.

☐ I have set up Concise-Mode in my main AI tool.

☐ I have created my Operator Second Brain with at least eight reference files.

☐ I have mapped my three most common AI tasks to the right model tier.

☐ I have switched at least one premium-model task to a cheaper model.

☐ I have tested the changes against a real workflow.

☐ I have scheduled a recurring weekly review on my calendar.

Two ways to take what’s in this issue and turn it into your own move this week.

Founder 100, $99. The flagship program for senior operators packaging their experience into a sellable practice. Includes every digital asset Build to Thrive ships and a positioning intensive: two 1:1 sessions where your offer, your delivery economics, and your audit discipline get pressure-tested against your specific situation. The sessions are not lectures. We work through your positioning and your delivery economics together against what the research and the operator case studies indicate works. Your conclusion, defended. Join at learn.buildtothrive.co/founder-100.

1:1 AI Micro Consulting Setup. A focused 1:1 working session where I help you set up your own AI Micro Consulting practice end to end: the offer definition, the 48-hour delivery model, the pricing logic, the buyer profile, and the audit discipline that protects your margin once the work starts coming in. For senior operators who already know they want to package their experience this way and want help making the call faster. Book at learn.buildtothrive.co/1-on-1.